The demo phase of agentic AI is over. By the first quarter of 2026, enterprises across financial services, legal, supply chain, and software engineering are running agentic systems in production, not in sandboxes or proofs-of-concept, but in live workflows where errors carry real cost. The results are instructive: some deployments are delivering measurable productivity gains; a larger number are quietly stalling between pilot approval and production rollout. The gap between "our agent impressed in the demo" and "our agent is reliably handling 10,000 decisions a week" is wider than most organisations anticipated when they signed off on the initial budget.

That gap is not primarily a technology problem. The models have matured significantly. The tooling has improved. What has not matured is enterprise understanding of what makes an agentic system production-ready: robust tool interfaces, disciplined state management, appropriate human oversight, and, critically, the evaluation infrastructure to know when an agent is silently wrong. Organisations that have solved these challenges are seeing compounding advantages. Those still iterating in the demo environment are burning time they cannot recover.

This post is a practitioner's assessment of where things actually stand in mid-2026: what agentic AI really means as a system architecture (not a marketing category), which enterprise verticals are producing the most credible production results, why most pilots still fail to ship, and what a rigorous implementation roadmap looks like. The data and examples draw on publicly reported deployments, published industry analyses, and patterns observable across early-adopter engineering organisations.

The organisations winning with agentic AI in 2026 are not the ones with the best models. They are the ones that engineered production-grade systems around those models: reliable tool interfaces, auditable decision chains, and governance frameworks that can keep pace with autonomous action.

Agentic AI systems are moving from experimental tools to core enterprise infrastructure in 2026

What "Agentic AI" Actually Means in 2026

The term "agentic AI" is being applied to systems with very different capabilities, and the conflation is causing real harm to enterprise planning. A chatbot that answers questions is not an agent. A workflow automation that calls an API on a fixed trigger is not an agent. An agent is a system that perceives its environment, plans a course of action to achieve a goal, takes actions that affect the environment, reflects on the results, and iterates — in a loop, without requiring human input at each step.

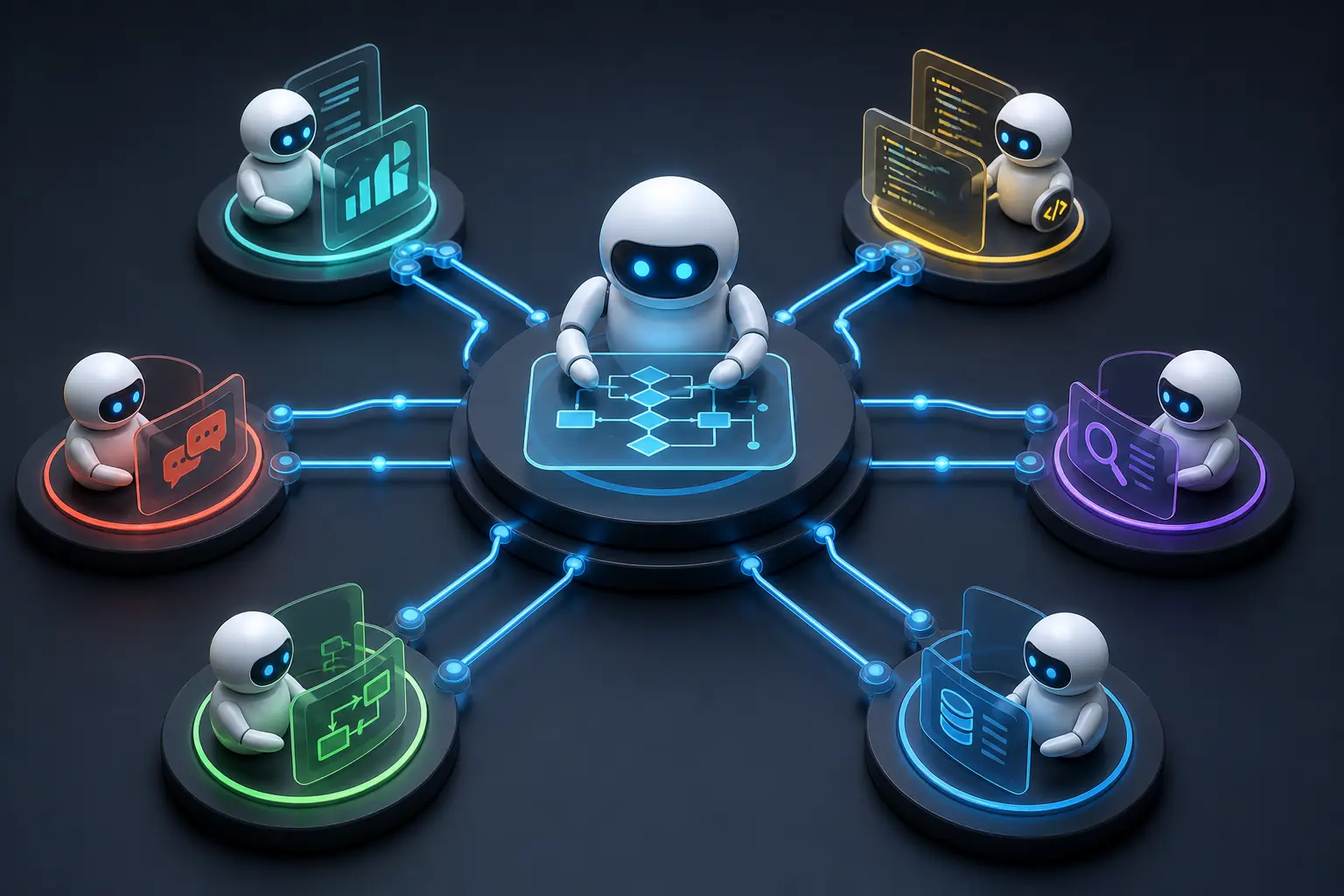

The distinction between a single-agent system and a multi-agent orchestration system matters even more. A single agent — one model, one tool set, one conversation thread — can handle reasonably complex tasks within a limited scope. But a multi-agent architecture introduces a planner that breaks problems into subtasks, specialist agents that execute those subtasks in parallel or sequence, and critic agents that validate outputs before they affect downstream systems. This architecture is what is producing the most significant enterprise results in 2026. It is also substantially more difficult to build, test, and govern.

The distinction between genuine agency and scripted task automation is equally important. An RPA robot filling out a form is automating a task. An agentic system that reads an ambiguous supplier contract, decides which clauses require escalation, drafts a summary, routes it to the correct legal reviewer, and then follows up if no response arrives within 48 hours is showing goal-directed, adaptive behaviour. The execution loop has five steps: perceive, plan, act, reflect, and iterate.
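A minimal Python sketch of that loop may make the structure concrete. The `plan`, `act`, and `reflect` callables are hypothetical stand-ins for model and tool calls, and `LoopState` is an illustrative container rather than part of any framework.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class LoopState:
    goal: str
    observations: list[str] = field(default_factory=list)
    done: bool = False


def run_agent_loop(
    state: LoopState,
    plan: Callable[[LoopState], str],
    act: Callable[[str], str],
    reflect: Callable[[LoopState, str], bool],
    max_iterations: int = 10,
) -> LoopState:
    """Plan, act, perceive the result, reflect; repeat until done or out of budget."""
    for _ in range(max_iterations):
        action = plan(state)                 # plan: pick the next action from goal + history
        result = act(action)                 # act: call a tool that affects the environment
        state.observations.append(result)    # perceive: record what came back
        state.done = reflect(state, result)  # reflect: has the goal been met?
        if state.done:
            break
    return state


# Toy stand-ins (assumptions for illustration; a real system would call a model and tools).
def toy_plan(state: LoopState) -> str:
    return f"step-{len(state.observations) + 1}"


def toy_act(action: str) -> str:
    return f"result of {action}"


def toy_reflect(state: LoopState, result: str) -> bool:
    return len(state.observations) >= 3


final = run_agent_loop(LoopState(goal="summarise contract"), toy_plan, toy_act, toy_reflect)
```

The `max_iterations` budget is a deliberate design choice: a loop with no human input at each step still needs a hard stop.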

This loop is what separates genuine agentic AI from sophisticated automation. And it is this loop — particularly the reflect and iterate steps — that most enterprise integrations underestimate.

Where Agentic AI Is Actually Working

Production deployments are clustering in a handful of enterprise verticals where the task structure is well-defined, the tool interfaces are accessible, and the cost of errors is tolerable with appropriate guardrails. These are not the verticals with the most ambitious agentic visions — they are the ones with the operational discipline to ship.

Software Development and Engineering

This is the most mature deployment domain. Tools like Anthropic's Claude Code, GitHub Copilot Workspace, and a growing ecosystem of agentic coding assistants are handling autonomous PR review, test generation, bug triage, and — in early-adopter engineering organisations — full implementation of well-scoped feature requests. The numbers from organisations that have committed to agentic-first development workflows are significant: 30–40% of routine code changes in these teams are now handled end-to-end by agentic systems, with human review focused on architectural decisions and edge cases rather than boilerplate implementation.

The key enabling factor in this domain is that the tool interfaces are reliable. A coding agent can read files, run tests, check diffs, and commit code with high confidence that the environment will respond predictably. This is a condition that most other enterprise domains have not yet achieved.

Financial Services Operations

Loan processing, compliance document review, and reconciliation workflows are yielding consistent results. Major banks and fintech organisations report 40–60% reduction in processing time for structured document workflows — mortgage applications, KYC packages, trade confirmations — when agentic systems handle the initial pass. Error rates on data extraction tasks are running below human benchmarks in controlled comparisons, primarily because agents do not have the cognitive fatigue issues that affect human reviewers on high-volume repetitive tasks.

The important nuance: these gains are in first-pass processing. The final decision on a loan or a regulatory filing still has a human in the chain. The agent handles the 80% of the work that is predictable; the human handles the exceptions that require judgment. This human-in-the-loop design is, in financial services, partly a regulatory requirement — and it turns out to also be where the productivity gains are most sustainable.

Legal and Contract Intelligence

Multi-agent contract review is producing the most dramatic time savings of any documented agentic deployment. Leading law firms and legal operations teams report 60–80% reduction in first-pass review time on standard commercial contracts when agentic systems handle clause identification, risk flagging, deviation from standard positions, and summary generation. The workflow typically uses a planner agent to route different contract types to specialist agents, with a summarisation agent producing the final review pack for a human lawyer.

Due diligence in M&A transactions — historically one of the most labour-intensive legal tasks — is being substantially accelerated. Agentic systems can process hundreds of documents in the time a human team would take to review tens, with consistent application of the review criteria.

Supply Chain and Procurement

Autonomous vendor qualification, purchase order matching, and exception handling are areas where agentic AI is replacing workflows that previously required significant human attention. Agents that can cross-reference supplier data against compliance databases, check PO terms against contract commitments, and flag discrepancies for human review are reducing procurement processing costs by 30–50% in early deployments. The agentic advantage here is the ability to handle the long tail of exceptions — the situations that rule-based automation could not accommodate — without defaulting everything to a human queue.

Customer Operations

The shift from chatbots to agentic customer operations is material. The difference is action capability: a chatbot tells a customer what to do; an agentic system does it. Agents that can look up account status, process a refund, update a shipping address, escalate a complaint, and send a confirmation — without a human in the loop for routine cases — are reducing cost-per-resolution and improving customer satisfaction scores simultaneously. Organisations with mature deployments report 70–80% containment rates (cases fully resolved by the agent without human handoff) on standard service workflows.

Multi-agent orchestration — where a planner agent coordinates specialist agents — is the architecture pattern now proving itself in production

Why Most Agentic Pilots Fail

A pilot failure rate of 60–70% is not primarily a model quality problem. The models available in 2026, from Anthropic, OpenAI, Google, and others, are capable of handling the reasoning demands of most enterprise agentic workflows. The failures are almost universally infrastructure, design, and evaluation failures. They are fixable, but only if organisations are honest about where the problems are.

Tool Reliability Gap

An agent is only as good as the tools it can call. Most enterprise systems — CRMs, ERPs, document management platforms, internal APIs — were not designed for agent consumption. They assume human users who can handle ambiguous responses, recover from timeouts, interpret error messages, and retry intelligently. Agents operating against these systems encounter unexpected response formats, rate limits, authentication failures, and silent errors at a rate that makes multi-step workflows unreliable. A pilot that works when a developer is watching — and manually clearing errors — fails in production. The fix requires instrumenting tool interfaces for agent reliability: structured responses, explicit error codes, retry semantics, and idempotency. This is unglamorous engineering work that most pilot teams skip.
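As a rough illustration of what "structured responses, explicit error codes, retry semantics, and idempotency" can look like, here is a small Python sketch. `ToolResult`, `call_tool`, the error-code strings, and the toy refund tool are all hypothetical names invented for this example; a real wrapper would sit in front of an actual CRM or ERP client.

```python
import time
import uuid
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ToolResult:
    ok: bool
    error_code: Optional[str] = None   # explicit, machine-readable failure reason
    data: Optional[dict] = None        # structured payload instead of free text


# Assumed set of error codes that are safe to retry.
RETRYABLE_CODES = {"RATE_LIMITED", "TIMEOUT"}


def call_tool(
    tool: Callable[[dict, str], ToolResult],
    payload: dict,
    max_attempts: int = 3,
    base_delay: float = 0.0,  # set above zero in real use for exponential backoff
) -> ToolResult:
    """Call a tool with retries, reusing one idempotency key across attempts."""
    idempotency_key = str(uuid.uuid4())
    result = ToolResult(ok=False, error_code="NOT_ATTEMPTED")
    for attempt in range(max_attempts):
        result = tool(payload, idempotency_key)
        if result.ok or result.error_code not in RETRYABLE_CODES:
            return result  # success, or a failure that retrying will not fix
        if attempt < max_attempts - 1:
            time.sleep(base_delay * 2 ** attempt)
    return result


# Toy tool that is rate limited twice, then succeeds.
seen_keys: list[str] = []


def flaky_refund_tool(payload: dict, key: str) -> ToolResult:
    seen_keys.append(key)
    if len(seen_keys) < 3:
        return ToolResult(ok=False, error_code="RATE_LIMITED")
    return ToolResult(ok=True, data={"refunded": payload["amount"]})


outcome = call_tool(flaky_refund_tool, {"amount": 25})
```

Reusing the same idempotency key on every retry is what lets the downstream system recognise a repeated request and avoid, for example, issuing the refund twice.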

Context Window Limitations in Long Workflows

Agentic systems working through multi-step workflows accumulate context rapidly. A contract review agent reading 40 documents, a customer service agent handling a complaint that spans six prior interactions, a procurement agent cross-referencing multiple supplier databases — all of these generate context that exceeds what a single model invocation can hold. Most pilot teams discover this boundary when their agent starts losing track of earlier steps or making decisions inconsistent with prior actions. Production systems require explicit state management: external memory stores, structured handoff formats, and context summarisation strategies. Pilots that treat the model's context window as an infinite scratchpad fail at scale.
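One way to picture explicit state management is a store that keeps the last few steps verbatim and folds older ones into a running summary. The sketch below is in-memory Python for illustration only; `StateStore`, `WorkflowState`, and `toy_summarise` are hypothetical, and in practice the summariser would likely be a model call and the store an external database.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class WorkflowState:
    summary: str = ""
    recent_steps: list[str] = field(default_factory=list)


class StateStore:
    """In-memory stand-in for an external store keyed by workflow id."""

    def __init__(self, summarise: Callable[[str, str], str], keep_recent: int = 3):
        self._states: dict[str, WorkflowState] = {}
        self._summarise = summarise
        self._keep_recent = keep_recent

    def record_step(self, workflow_id: str, step: str) -> None:
        state = self._states.setdefault(workflow_id, WorkflowState())
        state.recent_steps.append(step)
        # Fold the oldest steps into the summary so the context stays bounded.
        while len(state.recent_steps) > self._keep_recent:
            oldest = state.recent_steps.pop(0)
            state.summary = self._summarise(state.summary, oldest)

    def build_context(self, workflow_id: str) -> str:
        state = self._states[workflow_id]
        return "\n".join([f"Summary so far: {state.summary}", *state.recent_steps])


def toy_summarise(summary: str, step: str) -> str:
    # Assumption: a real summariser would compress; this one only concatenates.
    return f"{summary}; {step}" if summary else step


store = StateStore(toy_summarise, keep_recent=2)
for i in range(1, 6):
    store.record_step("wf-1", f"reviewed document {i}")
context = store.build_context("wf-1")
```

The context passed to each model invocation is then the summary plus the most recent steps, rather than the full history.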

Error Propagation in Multi-Step Chains

This is the failure mode that surprises organisations most, because it doesn't show up in demos. Consider a 10-step agentic workflow where each step has a 95% accuracy rate, which looks impressive in isolation. If errors at each step are independent, the probability of at least one error across 10 steps is 1 − 0.95^10, or roughly 40%. In a multi-agent pipeline with 15 steps, the figure rises to about 54%, so a majority of runs encounter at least one error. If those errors are not caught and handled at each step, they propagate and compound. A small misclassification in step 3 becomes a wrong routing decision in step 6, which becomes an incorrect action in step 9. The end result looks like an agent failure, but the root cause is a missing error-handling layer three steps earlier.
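The arithmetic behind those figures is short enough to check directly. The snippet assumes that errors at each step are independent, which is a simplification; the function name is illustrative.

```python
def p_at_least_one_error(per_step_accuracy: float, steps: int) -> float:
    """Probability that a chain of independent steps contains at least one error."""
    return 1.0 - per_step_accuracy ** steps


ten_steps = p_at_least_one_error(0.95, 10)      # about 0.40
fifteen_steps = p_at_least_one_error(0.95, 15)  # about 0.54
```

Raising per-step accuracy helps, but so does shortening the chain or adding a check after each step so that an error is caught before the next step builds on it.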

Human-in-the-Loop Design Failure

Pilots fail at two extremes. Some organisations, afraid of autonomous errors, insert humans at every decision point — creating a workflow that is slower and more expensive than the manual process it was meant to replace, because now humans are reviewing agent outputs rather than doing the work directly. Other organisations, eager to demonstrate full autonomy, remove humans from the loop entirely — creating systems that make consequential errors without any mechanism to catch them. The design pattern that works in production is selective human-in-the-loop: human checkpoints at high-stakes decision nodes, with full autonomy for routine steps. Designing these thresholds correctly requires understanding which decisions carry irreversible consequences.
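A selective human-in-the-loop policy can be expressed as a small routing function. The sketch below is one possible shape, not a recommended policy: the `Decision` fields and the threshold values (`min_confidence`, `max_auto_amount`) are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO = "auto"
    HUMAN_REVIEW = "human_review"


@dataclass
class Decision:
    action: str
    reversible: bool       # can the action be undone after the fact?
    confidence: float      # agent confidence in [0, 1]; assumed to be calibrated
    amount: float = 0.0    # monetary value at stake, if any


def route(decision: Decision, min_confidence: float = 0.9,
          max_auto_amount: float = 500.0) -> Route:
    """Send irreversible, low-confidence, or high-value decisions to a human."""
    if not decision.reversible:
        return Route.HUMAN_REVIEW
    if decision.confidence < min_confidence or decision.amount > max_auto_amount:
        return Route.HUMAN_REVIEW
    return Route.AUTO


routine = route(Decision("update shipping address", reversible=True, confidence=0.97))
risky = route(Decision("close customer account", reversible=False, confidence=0.99))
large = route(Decision("issue refund", reversible=True, confidence=0.95, amount=2000.0))
```

Irreversibility is checked first on purpose: high confidence does not make an irreversible action safe to automate.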

Evaluation Gap

The most dangerous failure mode is the agent that appears to be working but is silently wrong. In a demo, a developer watches the agent complete a task and validates the result. In production, with the agent processing thousands of items, there is no one watching each one. Most pilot teams can show that the agent works in the cases they tested. Few have built the infrastructure to measure accuracy systematically, detect degradation over time, and identify the classes of inputs where the agent underperforms. Without evaluation infrastructure, production deployment is a gamble.
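A bare-bones version of that evaluation infrastructure might sample outputs for human review, score accuracy per input class, and flag classes that have slipped below a baseline. Everything here is a hypothetical sketch: the function names, the 5-point tolerance, and the toy data are illustrative.

```python
import random
from collections import defaultdict


def sample_for_review(items: list, rate: float, seed: int = 0) -> list:
    """Pick a random subset of agent outputs to send to human reviewers."""
    rng = random.Random(seed)
    return [item for item in items if rng.random() < rate]


def accuracy_by_class(reviewed: list[tuple[str, str, str]]) -> dict[str, float]:
    """reviewed holds (input_class, agent_output, human_label) triples."""
    totals: dict[str, int] = defaultdict(int)
    correct: dict[str, int] = defaultdict(int)
    for input_class, agent_output, human_label in reviewed:
        totals[input_class] += 1
        correct[input_class] += int(agent_output == human_label)
    return {cls: correct[cls] / totals[cls] for cls in totals}


def degraded_classes(current: dict[str, float], baseline: dict[str, float],
                     tolerance: float = 0.05) -> list[str]:
    """Classes whose accuracy fell more than `tolerance` below their baseline."""
    return sorted(cls for cls, acc in current.items()
                  if cls in baseline and acc < baseline[cls] - tolerance)


reviewed = [
    ("invoice", "A", "A"), ("invoice", "B", "B"),
    ("contract", "X", "Y"), ("contract", "Z", "Z"),
]
current = accuracy_by_class(reviewed)
flagged = degraded_classes(current, baseline={"invoice": 0.98, "contract": 0.90})
```

Breaking accuracy down by input class matters because an aggregate score can look healthy while one class of inputs is failing consistently.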

💡 The Most Important Insight on Pilot Failure

Most agentic pilots fail not because the AI is inadequate but because the surrounding system — tool interfaces, state management, error handling, human escalation paths, and evaluation — was never built to production standard. The pilot succeeded because a developer was compensating for all of these gaps in real time. Removing that developer reveals the gaps. Build the surrounding system first; the model is the easy part.

Multi-Agent Orchestration — The Architecture That's Actually Working

The shift from single-agent to orchestrated multi-agent systems is the most significant architectural development in enterprise AI deployment in the first half of 2026. Single-agent systems have a fundamental constraint: one model, one context, one execution thread. Complex enterprise workflows require parallelism, specialisation, and validation — and those require multiple agents working in coordination.

The architecture that is proving itself in production has four distinct roles, three of them agents. A planner agent receives the high-level goal, decomposes it into sub-tasks, and routes work to specialist agents. Executor agents are specialists: optimised for specific tools, document types, or action domains. They receive narrow, well-defined tasks and return structured outputs. A critic or validator agent reviews the outputs of executor agents before they are accepted, catching errors, flagging ambiguities, and returning work for retry when outputs do not meet defined quality criteria. Finally, a memory and state management layer (often not an agent itself but a structured external store) maintains the workflow state, making it durable across model invocations and recoverable from partial failures.

Emerging orchestration frameworks — including LangGraph, CrewAI, and Anthropic's agent tooling — are providing foundational infrastructure for this pattern. The key engineering principle is that each agent boundary is a quality gate: outputs must meet a defined standard before passing downstream. This transforms error propagation from a silent accumulation problem into an explicit, managed failure mode.
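The "each agent boundary is a quality gate" principle can be sketched in plain Python without any framework. This is not the API of LangGraph, CrewAI, or any other tool; `orchestrate`, `run_with_quality_gate`, and the toy planner, executors, and critic are hypothetical stand-ins for model-backed agents.

```python
from typing import Callable

Executor = Callable[[str, str], str]             # (task, feedback) -> output
Critic = Callable[[str, str], tuple[bool, str]]  # (task, output) -> (accepted, feedback)


def run_with_quality_gate(task: str, executor: Executor, critic: Critic,
                          max_retries: int = 2) -> str:
    """Run an executor and pass its output downstream only if the critic accepts it."""
    feedback = ""
    for _ in range(max_retries + 1):
        output = executor(task, feedback)
        accepted, feedback = critic(task, output)
        if accepted:
            return output
    # Fail loudly rather than letting a rejected output flow downstream.
    raise RuntimeError(f"{task!r} failed the quality gate: {feedback}")


def orchestrate(goal: str, planner: Callable[[str], list[tuple[str, str]]],
                executors: dict[str, Executor], critic: Critic) -> dict[str, str]:
    """Planner decomposes the goal; each sub-task goes through a gated executor."""
    return {task: run_with_quality_gate(task, executors[kind], critic)
            for kind, task in planner(goal)}


# Toy agents for illustration only.
def toy_planner(goal: str) -> list[tuple[str, str]]:
    return [("extract", "find termination clause"), ("summarise", "summarise risks")]


def toy_extract(task: str, feedback: str) -> str:
    return "Clause 12.3" if feedback else ""   # empty on first try, fixed on retry


def toy_summarise(task: str, feedback: str) -> str:
    return f"summary: {task}"


def toy_critic(task: str, output: str) -> tuple[bool, str]:
    return (True, "") if output else (False, "output was empty")


results = orchestrate("review contract", toy_planner,
                      {"extract": toy_extract, "summarise": toy_summarise}, toy_critic)
```

When retries run out, the gate raises instead of returning a best guess, which is what turns a silent error into an explicit one.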

Physical AI — Agentic Systems Leaving the Screen

May 2026 marks a distinct inflection point: agentic AI is no longer only a software phenomenon. Physical AI — systems that perceive the physical environment and take actions in it — is entering real enterprise deployment. This shifts the stakes of agentic failure in ways that software-only deployments do not.

NVIDIA's Isaac GR00T open models, released to the research community in early 2026, represent the most significant open-source contribution to physical AI to date. GR00T provides pre-trained foundation models for humanoid robots, enabling them to learn new tasks from human demonstrations rather than from thousands of hours of programmed instructions. Paired with Isaac Sim 6.0 — NVIDIA's physics simulation environment that now supports photorealistic rendering of real-world environments — organisations can train and validate robot behaviours in simulation before physical deployment. The sim-to-real transfer gap, historically a major obstacle in robotics, is narrowing.

The enabling technology is Vision-Language-Action (VLA) models: systems that take visual input (camera feeds), combine it with language instructions ("pick up the red component and place it in bay 7"), and output motor commands. VLA models are enabling robots to operate in unstructured environments — factory floors with irregular layouts, hospital corridors, warehouse picking areas — that traditional industrial automation could not handle. This is categorically different from the fixed-program robots that have operated in structured manufacturing environments for decades.

NVIDIA's Isaac for Healthcare initiative, including the Rheo blueprint and deployments with health systems including AdventHealth, is bringing physical AI into hospital logistics and patient support workflows. Robots that navigate hospital floors autonomously, deliver supplies, support patient room preparation, and operate in environments that shift daily are moving from pilot to operational deployment. The governance and safety requirements in healthcare make this a particularly demanding test, and the fact that it is proceeding speaks to the maturity the underlying technology has reached.

Vision-Language-Action models are enabling robots to operate in unstructured environments — the physical frontier of agentic AI

Governance — The Problem Nobody Has Solved

The honest assessment of enterprise agentic AI governance in mid-2026 is this: most organisations have deployed agentic systems faster than their governance frameworks can track. The technology moved quickly; the audit, accountability, and compliance infrastructure has not kept pace. This is not unique to AI — it echoes every major enterprise technology cycle — but the autonomous nature of agentic systems makes the consequences of governance gaps more acute.

The core challenges are well-understood in principle but poorly solved in practice. Agent audit trails are the foundation: every action an agent takes, every tool call it makes, every decision it reaches should be logged in a format that a human reviewer can interrogate. Most agentic frameworks generate some form of logging, but translating that logging into a usable audit trail — one that connects a downstream business outcome to the specific sequence of agent decisions that caused it — requires deliberate design. Most organisations have not done this design work.
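To make the idea of a usable audit trail concrete, the sketch below records each agent action as a structured event keyed by a workflow id, so that a business outcome can be traced back to the ordered sequence of decisions behind it. The `AuditEvent` fields and the in-memory `AuditLog` are assumptions for illustration; a production trail would need durable, tamper-evident storage.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditEvent:
    workflow_id: str   # ties the event to one business outcome
    step: int          # position in the decision chain
    agent: str         # which agent acted
    kind: str          # e.g. "tool_call" or "decision"
    detail: dict       # inputs, outputs, or rationale, kept JSON-serialisable
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())


class AuditLog:
    """Append-only log of JSON lines (held in memory here for simplicity)."""

    def __init__(self) -> None:
        self._lines: list[str] = []

    def record(self, event: AuditEvent) -> None:
        self._lines.append(json.dumps(asdict(event), sort_keys=True))

    def trace(self, workflow_id: str) -> list[dict]:
        """Return every event for one workflow, in step order."""
        events = (json.loads(line) for line in self._lines)
        return sorted((e for e in events if e["workflow_id"] == workflow_id),
                      key=lambda e: e["step"])


log = AuditLog()
log.record(AuditEvent("wf-42", 2, "executor", "tool_call", {"tool": "lookup_vendor"}))
log.record(AuditEvent("wf-42", 1, "planner", "decision", {"chosen": "qualify vendor"}))
log.record(AuditEvent("wf-7", 1, "planner", "decision", {"chosen": "match PO"}))
trail = log.trace("wf-42")
```

The workflow id is the piece most generic logging lacks: without it, connecting an outcome to the decisions that caused it means reconstructing the chain by hand.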

Explainability of multi-step decisions is a related challenge. When an agent's 12-step reasoning process produces a decision that a manager wants to review or that a regulator wants to examine, the explanation must be comprehensible to a non-technical audience. Current agentic systems produce outputs and action logs, but translating a chain of model invocations, tool calls, and intermediate states into a plain-language explanation of "why the agent decided X" is an open engineering problem. The organisations that have made the most progress treat explainability as a design requirement from the start — not as a reporting feature added after the fact.

Liability when an agent makes a wrong autonomous decision is the governance question that legal and compliance teams are least prepared for. If an agentic procurement system approves a vendor that subsequently causes a supply chain disruption, or an agentic customer service system communicates incorrect terms to a client, who is responsible? Current regulatory frameworks were not written with autonomous AI agents in mind. Organisations deploying agentic systems in consequential domains need explicit policies — written, reviewed, and tested — on the scope of agent authority, the conditions under which human override is required, and the escalation path when an agent action needs to be undone.

Data handling in agentic loops creates privacy and compliance exposure that traditional software architectures did not. Agents processing documents, querying APIs, and generating outputs may handle personally identifiable information, commercially sensitive data, or regulated financial information across multiple tool calls and model invocations. The data residency, retention, and access control requirements for agentic workflows are meaningfully more complex than for single-step AI inference.

⚠️ The Central Governance Risk

The most dangerous governance gap is not the agent that makes an obvious error — those are caught. It is the agent that makes a systematically biased or subtly wrong decision at scale, over thousands of cases, before anyone notices the pattern. Building detection infrastructure — statistical monitoring of agent outputs, periodic human review of sampled decisions, anomaly alerts — is not optional in production deployment. It is the difference between agentic AI that is auditable and agentic AI that is a liability.
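Statistical monitoring of agent outputs can start very simply. The sketch below compares an observed decision rate (for example, the share of vendors approved) against a historical baseline using a one-sample z-score for a proportion. The baseline, the alert threshold of 3, and the function names are assumptions, and a real deployment would probably monitor several signals.

```python
import math


def rate_drift_z(baseline_rate: float, observed_positive: int,
                 observed_total: int) -> float:
    """z-score of an observed rate against a baseline proportion."""
    observed_rate = observed_positive / observed_total
    standard_error = math.sqrt(baseline_rate * (1 - baseline_rate) / observed_total)
    return (observed_rate - baseline_rate) / standard_error


def should_alert(z: float, threshold: float = 3.0) -> bool:
    """Alert when the rate has moved further than `threshold` standard errors."""
    return abs(z) > threshold


# Baseline: the agent historically approves 20% of cases.
z_shifted = rate_drift_z(0.20, observed_positive=260, observed_total=1000)
z_normal = rate_drift_z(0.20, observed_positive=205, observed_total=1000)
alerts = (should_alert(z_shifted), should_alert(z_normal))
```

A shift from 20% to 26% over a thousand cases is hard to notice case by case, but it is far outside normal variation, which is the kind of pattern this check exists to surface.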

A Practical Deployment Roadmap

Based on the deployment patterns that are producing durable results, a four-phase roadmap for enterprise agentic AI reflects the engineering and governance discipline required to bridge the pilot-to-production gap.

Phase 1: Single-Agent, Narrow Scope, Measurable Task (1–3 Months)

Choose one task where the inputs are well-defined, the success criterion is measurable, the consequences of error are recoverable, and the tool interfaces are reliable. Deploy a single agent with a narrow mandate. Instrument it thoroughly: log every action, measure accuracy against a human baseline on a sample of outputs, and establish what failure looks like before you decide what success looks like. The purpose of Phase 1 is not to demonstrate value — it is to understand the real behaviour of an agentic system operating against your specific enterprise environment. Expect surprises. Treat them as data.

Phase 2: Human-in-the-Loop Integration and Feedback Instrumentation (3–6 Months)

Introduce structured human review at defined decision nodes. Design the review interface so that human feedback is captured in a format that can be used to improve agent behaviour — not just as an approval step, but as a training signal. Build the evaluation infrastructure: automated accuracy scoring, output sampling, degradation detection. This phase is where most organisations discover that their Phase 1 results were better than production will sustain, because Phase 1 had a developer compensating for gaps. Fix the gaps. The human-in-the-loop design you establish here will determine the economics of everything that follows.

Phase 3: Multi-Agent Orchestration for Compound Workflows (6–12 Months)

Introduce the planner-executor-critic architecture for more complex workflows. This requires deliberately engineering agent boundaries: what does each agent receive, what format does it return, what quality threshold must it meet before its output proceeds? Invest in state management and error recovery at each boundary. Run extensive parallel testing — the same workflow handled by the multi-agent system and by humans — and measure not just accuracy but latency, cost per resolution, and error recovery rate. Production readiness requires that the system degrades gracefully, not catastrophically, when something goes wrong.

Phase 4: Autonomous Operation with Governance Layer (12–24 Months)

Full autonomous operation on defined workflow classes, with human involvement limited to exception handling, audit review, and governance oversight. The governance layer — audit trails, explainability outputs, statistical monitoring, anomaly detection — must be operational and reviewed regularly before autonomy is expanded. This phase is not a destination; it is an ongoing operating discipline. The organisations that sustain agentic AI in production treat governance as a continuous function, not a one-time implementation.

The Competitive Divide Forming Now

The concept of the "One-Hour Company" — an organisation that can spin up new operational capacity in hours rather than weeks, using agentic systems to handle the coordination, documentation, and execution work that previously required teams — is moving from theoretical to operational in 2026. It is not universal yet. But the organisations that have committed to agentic AI as a core operational capability are building compounding advantages that are becoming visible in their cost structures, their delivery velocity, and their ability to handle scale without proportional headcount growth.

Software organisations with mature agentic coding workflows are shipping faster, with fewer regression defects, than peers still relying entirely on human engineering throughput. Legal and financial services firms with production agentic review systems are handling transaction volumes that would require significantly larger teams in a purely manual model. The compounding effect matters: organisations that are 18 months ahead in agentic deployment are not just 18 months ahead — they are building evaluation data, governance frameworks, and institutional knowledge that accelerates every subsequent deployment. The learning curve is real, and the organisations that have already climbed it have a meaningful advantage over those starting today.

The window for closing this gap is not unlimited. The organisations still iterating through pilots in 2027 will find that the production infrastructure, governance frameworks, and institutional capability their more advanced competitors have built will be difficult to replicate quickly. This is not a reason for panic — it is a reason for execution discipline. Model selection matters less than engineering discipline. Platform choices matter less than the quality of your evaluation infrastructure. The organisations that understand this are the ones building durable advantages. The ones still waiting for the technology to become simpler are underestimating how much of the work is organisational, not technical.